The success of SLM is very dependent on the quality of the Service Portfolio and the Service Catalogue and their contents, because they provide the necessary information on the services to be managed within the SLM process.

This will enable SLM to ensure that all the services and components are designed and delivered to meet their targets in terms of business needs. The SLM processes should include the:

An SLA is a written agreement between an IT service provider and the IT customer(s), defining the key service targets and responsibilities of both parties. The emphasis must be on agreement, and SLAs should not be used as a way of holding one side or the other to ransom. A true partnership should be developed between the IT service provider and the customer, so that a mutually beneficial agreement is reached - otherwise the SLA could quickly fall into disrepute and a 'blame culture' could develop that would prevent any true service quality improvements from taking place.

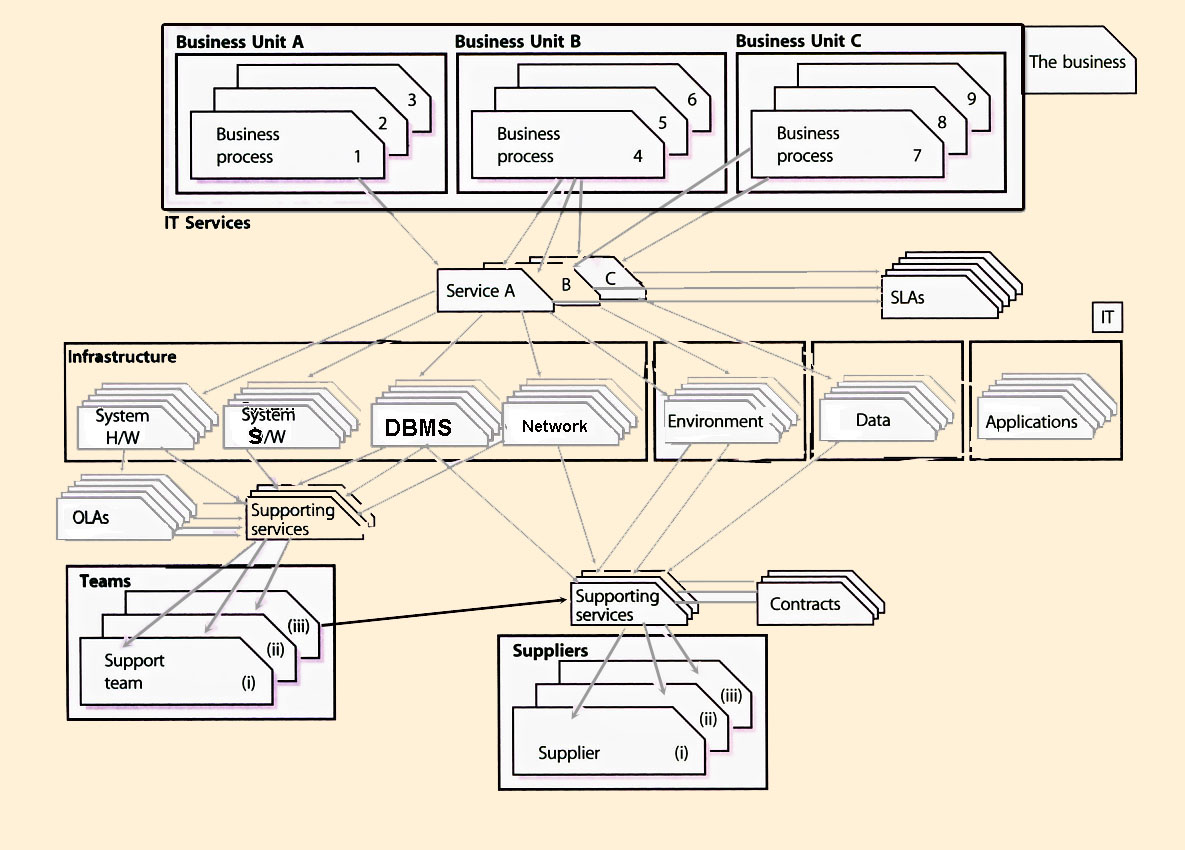

SLM is also responsible for ensuring that all targets and measures agreed in SLAs with the business are supported by appropriate underpinning OLAs or contracts, with internal support units and external partners and suppliers. This is illustrated in Figure 4.5.

Figure 4.5 shows the relationship between the business and its processes and the services, and the associated technology, supporting services, teams and suppliers required to meet their needs. It demonstrates how important the SLAs, OLAs and contracts are in defining and achieving the level of service required by the business.

An OLA is an agreement between an IT service provider and another part of the same organization that assists with the provision of services - for instance, a facilities department that maintains the air conditioning, or a network support team that supports the network service. An OLA should contain targets that underpin those within an SLA to ensure that targets will not be breached by failure of the supporting activity.

Figure 4.5 Service Level Management

The key activities within the SLM process should include:

- Determine, negotiate, document and agree requirements for new or changed services in SLRs, and manage and review them through the Service Lifecycle into SLAs for operational services
- Monitor and measure service performance achievements of all operational services against targets within SLAs
- Collate, measure and improve customer satisfaction
- Produce service reports

The interfaces between the main activities are illustrated in Figure 4.6.

Figure 4.6 The Service Level Management process

Although Figure 4.6 illustrates all the main activities of SLM as separate activities, they should be implemented as one integrated SLM process that can be consistently applied to all areas of the businesses and to all customers. These activities are described in the following sections.

Hints and tips: A combination of these structures might be appropriate, provided all services and customers are covered, with no overlap or duplication.

|

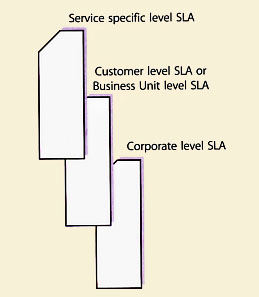

| Figure 4.7 Multi-level SLAs |

Some organizations have chosen to adopt a multi-level SLA structure. For example, a three-layer structure as follows:

As shown in Figure 4.7, such a structure allows SLAs to be kept to a manageable size, avoids unnecessary duplication, and reduces the need for frequent updates. However, it does mean that extra effort is required to maintain the necessary relationships and links within the Service Catalogue and the CMS.

Many organizations have found it valuable to produce standards and a set of proformas or templates that can be used as a starting point for all SLAs, SLRs and OLAs. The proforma can often be developed alongside the draft SLA. Guidance on the items to be included in an SLA is given in Appendix F. Developing standards and templates will ensure that all agreements are developed in a consistent manner, and this will ease their subsequent use, operation and management.

Hints and tips: Make roles and responsibilities a part of the SLA. Consider three perspectives - the IT provider, the IT customer and the actual users.

The wording of SLAs should be clear and concise and leave no room for ambiguity. There is normally no need for agreements to be written in legal terminology, and plain language aids a common understanding. It is often helpful to have an independent person, who has not been involved with the drafting, to do a final read-through. This often throws up potential ambiguities and difficulties that can then be addressed and clarified. For this reason alone, it is recommended that all SLAs contain a glossary, defining any terms and providing clarity for any areas of ambiguity.

It is also worth remembering that SLAs may have to cover services offered internationally. In such cases the SLA may have to be translated into several languages. Remember also that an SLA drafted in a single language may have to be reviewed for suitability in several different parts of the world (e.g. a version drafted in Australia may have to be reviewed for suitability in the USA or the UK - and differences in terminology, style and culture must be taken into account).

Where the IT services are provided to another organization by an external service provider, sometimes the service targets are contained within a contract and at other times they are contained within an SLA or schedule attached to the contract. Whatever document is used, it is essential that the targets documented and agreed are clear, specific and unambiguous, as they will provide the basis of the relationship and the quality of service delivered.

It cannot be over-stressed how difficult this activity of determining the initial targets for inclusion with an SLR or SLA is. All of the other processes need to be consulted for their opinion on what are realistic targets that can be achieved, such as Incident Management on incident targets. The Capacity and Availability Management processes will be of particular value in determining appropriate service availability and performance targets. If there is any doubt, provisional targets should be included within a pilot SLA that is monitored and adjusted through a service warranty period, as illustrated in Figure 3.5.

While many organizations have to give initial priority to introducing SLAs for existing services, it is also important to establish procedures for agreeing Service Level Requirements (SLRs) for new services being developed or procured.

The SLRs should be an integral part of the Service Design criteria, of which the functional specification is a part. They should, from the very start, form part of the testing/trialing criteria as the service progresses through the stages of design and development or procurement. This SLR will gradually be refined as the service progresses through the stages of its lifecycle, until it eventually becomes a pilot SLA during the early life support period. This pilot or draft SLA should be developed alongside the service itself, and should be signed and formalized before the service is introduced into live use.

It can be difficult to draw out requirements, as the business may not know what they want - especially if not asked previously - and they may need help in understanding and defining their needs, particularly in terms of capacity, security, availability and IT service continuity. Be aware that the requirements initially expressed may not be those ultimately agreed. Several iterations of negotiations may be required before an affordable balance is struck between what is sought and what is achievable and affordable. This process may involve a redesign of the service solution each time.

If new services are to be introduced in a seamless way into the live environment, another area that requires attention is the planning and formalization of the support arrangements for the service and its components. Advice should be sought from Change Management and Configuration Management to ensure the planning is comprehensive and covers the implementation, deployment and support of the service and its components. Specific responsibilities need to be defined and added to existing contracts/OLAs, or new ones need to be agreed. The support arrangements and all escalation routes also need adding to the CMS, including the Service Catalogue where appropriate, so that the Service Desk and other support staff are aware of them. Where appropriate, initial training and familiarization for the Service Desk and other support groups and knowledge transfer should be completed before live support is needed.

It should be noted that additional support resources (i.e. more staff) may be needed to support new services. There is often an expectation that an already overworked support group can magically cope with the additional effort imposed by a new service.

Using the draft agreement as a basis, negotiations must be held with the customer(s) or customer representatives to finalize the contents of the SLA and the initial service level targets, and with the service providers to ensure that these are achievable.

Anecdote: A global network provider agreed to availability targets for the provision of a managed network service. These availability targets were agreed to at the point where the service entered the customer's premises. However, the global network provider could only monitor and measure availability at the point the connection left its premises. The network links were provided by a number of different national telecommunications service providers, with widely varying availability levels. The result was a complete mismatch between the availability figures produced by the network provider and the customer, with correspondingly prolonged and heated debate and argument.

Existing monitoring capabilities should be reviewed and upgraded as necessary. Ideally this should be done ahead of, or in parallel with, the drafting of SLAs, so that monitoring can be in place to assist with the validation of proposed targets.

It is essential that monitoring matches the customer's true perception of the service. Unfortunately this is often very difficult to achieve. For example, monitoring of individual components, such as the network or server, does not guarantee that the service will be available so far as the customer is concerned. The customer perceives that while a failure might affect more than one service, their concern is with the particular service which they cannot access at the time of the reported incident (though this is not always true, so caution is needed). Without monitoring all components in the end-to-end service (which may be very difficult and costly to achieve) a true picture cannot be gained. Similarly, users must be aware that they should report incidents immediately to aid diagnostics, especially if they are performance-related, so that the service provider is aware that service targets are being breached.
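As a rough illustration of why component-level figures can overstate what the customer actually experiences, the sketch below multiplies the availability of each component in a service chain. It assumes, purely for illustration, that every component is required for the service (a series arrangement) and that failures are independent; the component names and figures are hypothetical.

```python
import math


def end_to_end_availability(component_availabilities):
    """Availability of a service whose components are all required (in series).

    Assumes independent failures, so the individual availabilities multiply.
    """
    return math.prod(component_availabilities.values())


# Hypothetical component figures, each a fraction of agreed service time.
components = {"network": 0.999, "server": 0.999, "application": 0.999}

service = end_to_end_availability(components)
print(f"End-to-end availability: {service:.4%}")
```

Under these assumptions the end-to-end figure is always lower than that of the weakest single component, which is one reason a report built only from component monitoring may not match the customer's perception.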

A considerable number of organizations use their Service Desk, linked to a comprehensive CMS, to monitor the customer's perception of availability. This may involve making specific changes to incident/problem logging screens and may require stringent compliance with incident logging procedures. All of this needs discussion and agreement with the Availability Management process.

The Service Desk is also used to monitor incident response times and resolution times, but once again the logging screen may need amendment to accommodate data capture, and call-logging procedures may need tightening and must be strictly followed. If support is being provided by a third party, this monitoring may also underpin Supplier Management.

It is essential to ensure that any incident/problem handling targets included in SLAs are the same as those included in Service Desk tools and used for escalation and monitoring purposes. Where organizations have failed to recognize this, and perhaps used defaults provided by the tool supplier, they have ended up in a situation where they are monitoring something different from that which has been agreed in the SLAs, and are therefore unable to say whether SLA targets have been met, without considerable effort to manipulate the data. Some amendments may be needed to support tools, to include the necessary fields so that relevant data can be captured.
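A simple consistency check can help to catch this kind of mismatch early. The following is a minimal sketch, assuming the agreed SLA targets and the Service Desk tool settings can both be exported as mappings from incident priority to resolution target in minutes; the priorities, values and function name are hypothetical.

```python
def find_target_mismatches(sla_targets, tool_targets):
    """Return priorities whose tool setting differs from the agreed SLA target.

    Each entry maps a priority to a (sla_value, tool_value) pair; tool_value
    is None when the tool has no target configured for that priority.
    """
    mismatches = {}
    for priority, sla_value in sla_targets.items():
        tool_value = tool_targets.get(priority)
        if tool_value != sla_value:
            mismatches[priority] = (sla_value, tool_value)
    return mismatches


# Hypothetical resolution targets in minutes.
agreed_in_sla = {"P1": 60, "P2": 240, "P3": 480}
configured_in_tool = {"P1": 60, "P2": 480}  # P2 left at a supplier default, P3 missing

for priority, (sla, tool) in find_target_mismatches(agreed_in_sla, configured_in_tool).items():
    print(f"{priority}: SLA says {sla} min, tool says {tool}")
```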

Another notoriously difficult area to monitor is transaction response times (the time between sending a screen and receiving a response). Often end-to-end response times are technically very difficult to monitor. In such cases it may be appropriate to deal with this as follows:

This approach not only overcomes the technical difficulties of monitoring, but also ensures that incidents of poor response are reported at the time they occur. This is very important, as poor response is often caused by a number of transient interacting events that can only be detected if they are investigated immediately.

The preferred method, however, is to implement some form of automated client/server response time monitoring in close consultation with Service Operation. Wherever possible, implement sampling or 'robot' tools and techniques to give indications of slow or poor performance. These tools can measure or sample response times that are the same as, or very similar to, those being experienced by a variety of users, and are becoming increasingly available and increasingly cost-effective to use.

Hints and tips: Some organizations have found that, in reality, 'poor response' is sometimes a problem of user perception. The user, having become used to a particular level of response over a period of time, starts complaining as soon as this is slower. Take the view that 'if the user thinks the service is slow, then it is'.

If the SLA includes targets for assessing and implementing Requests for Change (RFCs), then the monitoring of targets relating to Change Management should ideally be carried out using whatever Change Management tool is in use (preferably part of an integrated Service Management support tool) and change logging screens and escalation processes should support this.

From the outset, it is wise to try and manage customers' expectations. This means setting proper expectations and appropriate targets in the first place, and putting a systematic process in place to manage expectations going forward, as satisfaction = perception - expectation (where a zero or positive score indicates a satisfied customer).
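The relationship above can be expressed as a short calculation. This is only a sketch: it assumes perception and expectation have been scored on the same numeric scale (for example, a survey rating from 1 to 10), which the text does not prescribe.

```python
def satisfaction(perception, expectation):
    """Satisfaction score: perception minus expectation."""
    return perception - expectation


def is_satisfied(perception, expectation):
    """A zero or positive score indicates a satisfied customer."""
    return satisfaction(perception, expectation) >= 0


# Hypothetical survey scores on a 1-10 scale.
print(satisfaction(7, 8), is_satisfied(7, 8))  # service rated 7 against an expectation of 8
print(satisfaction(8, 8), is_satisfied(8, 8))  # service rated 8 against an expectation of 8
```

The same perceived service can therefore yield a satisfied or a dissatisfied customer depending on the expectation that was set, which is why managing expectations matters as much as improving delivery.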

SLAs are just documents, and in themselves do not materially alter the quality of service being provided. A degree of patience is therefore needed and should be built into expectations.

Where charges are being made for the services provided, this should modify customer demands. Where direct charges are not made, the support of senior business managers should be enlisted to ensure that excessive or unrealistic demands are not placed on the IT provider by any individual customer group.

It is therefore recommended that attempts be made to monitor customer perception on these soft issues. Methods of doing this include:

Where possible, targets should be set for these and monitored as part of the SLA. Ensure that if users provide feedback they receive some return, and demonstrate to them that their comments have been incorporated in an action plan, perhaps a SIP. All customer satisfaction measurements should be reviewed, and where variations are identified, they should be analyzed, with action taken to rectify the variation.

Often these agreements are referred to as 'back-to-back' agreements. This is to reflect the need to ensure that all targets within underpinning or 'back-to-back' agreements are aligned with, and support, targets agreed with the business in SLAs or OLAs. There may be several layers of these underpinning or 'back-to-back' agreements with aligned targets. It is essential that the targets at each layer are aligned with, and support, the targets contained within the higher levels (i.e. those closest to the business targets).

OLAs need not be very complicated, but should set out specific back-to-back targets for support groups that underpin the targets included in SLAs. For example, if the SLA includes overall time to respond and fix targets for incidents (varying on the priority levels), then the OLAs should include targets for each of the elements in the support chain. It must be understood, however, that the incident resolution targets included in SLAs should not normally match the same targets included in contracts or OLAs with suppliers. This is because the SLA targets must include an element for all stages in the support cycle (e.g. detection time, Service Desk logging time, escalation time, referral time between groups etc, Service Desk review and closure time - as well as the actual time fixing the failure).
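The point that an SLA target has to cover every stage of the support cycle can be checked with simple arithmetic. The sketch below assumes each stage has its own target in minutes and that the stages run one after another; the stage names follow the examples in the text, while the figures and function names are hypothetical.

```python
def support_chain_total(stage_targets):
    """Sum the per-stage targets (in minutes) across the whole support cycle."""
    return sum(stage_targets.values())


def underpins(sla_target_minutes, stage_targets):
    """True if the combined stage targets fit within the SLA resolution target."""
    return support_chain_total(stage_targets) <= sla_target_minutes


# Hypothetical targets, in minutes, for one incident priority.
stages = {
    "detection": 5,
    "service_desk_logging": 10,
    "escalation": 10,
    "referral_between_groups": 15,
    "fixing_the_failure": 120,   # e.g. the target held in an OLA or supplier contract
    "review_and_closure": 20,
}

sla_target = 240
print(support_chain_total(stages), underpins(sla_target, stages))
```

In this illustration the fix target of 120 minutes is well below the SLA target of 240 minutes; setting both to the same value would leave no time for the other stages.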

The SLA target should cover the time taken to answer calls, escalate incidents to technical support staff, and the time taken to start to investigate and to resolve incidents assigned to them. In addition, overall support hours should be stipulated for all groups that underpin the required service availability times in the SLA. If special procedures exist for contacting staff (e.g. out-of-hours telephone support) these must also be documented.

OLAs should be monitored against OLA and SLA targets, and reports on achievements provided as feedback to the appropriate managers of each support team. This highlights potential problem areas, which may need to be addressed internally or by a further review of the SLA or OLA. Serious consideration should be given to introducing formal OLAs for all internal support teams that contribute to the support of operational services.

Before committing to new or revised SLAs, it is therefore important that existing contractual arrangements are investigated and, where necessary, upgraded. This is likely to incur additional costs, which must either be absorbed by IT or passed on to the customer. In the latter case, the customer must agree to this, or the more relaxed targets in existing contracts should be agreed for inclusion in SLAs. This activity needs to be completed in close consultation with the Supplier Management process, to ensure not only that SLM requirements are met, but also that all other process requirements are considered, particularly supplier and contractual policies and standards.

The SLA reporting mechanisms, intervals and report formats must be defined and agreed with the customers. The frequency and format of Service Review Meetings must also be agreed with the customers. Regular intervals are recommended, with periodic reports synchronized with the reviewing cycle.

Periodic reports must be produced and circulated to customers (or their representatives) and appropriate IT managers a few days in advance of service level reviews, so that any queries or disagreements can be resolved ahead of the review meeting. The meeting is not then diverted by such issues.

The periodic reports should incorporate details of performance against all SLA targets, together with details of any trends or specific actions being undertaken to improve service quality. A useful technique is to include an SLA Monitoring (SLAM) chart at the front of a service report to give an 'at-a-glance' overview of how achievements have measured up against targets. These are most effective if colour coded Red, Amber and Green, and are sometimes referred to as RAG charts as a result. Other interim reports may be required by IT management for OLA or internal performance reviews and/or supplier or contract management. This is likely to be an evolving process - a first effort is unlikely to be the final outcome.
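A SLAM chart of this kind can be generated from the measured achievements. The sketch below is one possible colour-coding rule, not a prescribed one: it assumes Green means the target was met, Amber means the achievement fell short by no more than a chosen margin, and Red means anything worse. The margin, services and figures are hypothetical.

```python
def rag_status(achieved, target, amber_margin=0.5):
    """Classify an achievement against its target as Green, Amber or Red.

    amber_margin is an assumed tolerance, in the same units as the target.
    """
    if achieved >= target:
        return "Green"
    if achieved >= target - amber_margin:
        return "Amber"
    return "Red"


# Hypothetical monthly availability figures (%) against a 99.5% target.
target = 99.5
achievements = {
    "Payroll": [99.7, 99.2, 98.0],
    "Email": [99.9, 99.6, 99.5],
}

for service, months in achievements.items():
    row = " | ".join(rag_status(value, target) for value in months)
    print(f"{service:<8} {row}")
```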

The resources required to produce and verify reports should not be underestimated. It can be extremely time consuming, and if reports do not reflect the customer's own perception of service quality accurately, they can make the situation worse. It is essential that accurate information from all areas and all processes (e.g. Incident Management, Problem Management, Availability Management, Capacity Management, Change and Configuration Management) is analyzed and collated into a concise and comprehensive report on service performance, as measured against agreed business targets.

SLM should identify the specific reporting needs and automate production of these reports, as far as possible. The extent, accuracy and ease with which automated reports can be produced should form part of the selection criteria for integrated support tools. These service reports should not only include details of current performance against targets, but should also provide historic information on past performance and trends, so that the impact of improvement actions can be measured and predicted.

Actions must be placed on the customer and provider as appropriate to improve weak areas where targets are not being met. All actions must be minuted, and progress should be reviewed at the next meeting to ensure that action items are being followed up and properly implemented.

Particular attention should be focused on each breach of service level to determine exactly what caused the loss of service and what can be done to prevent any recurrence. If it is decided that the service level was, or has become, unachievable, it may be necessary to review, renegotiate and re-agree different service targets. If the service break has been caused by a failure of a third-party or internal support group, it may also be necessary to review the underpinning agreement or OLA. Analysis of the cost and impact of service breaches provides valuable input and justification of SIP activities and actions. The constant need for improvement needs to be balanced and focused on those areas most likely to give the greatest business benefit.

Reports should also be produced on the progress and success of the SIP, such as the number of SIP actions that were completed and the number of actions that delivered their expected benefit.

Hints and tips: 'A spy in both camps' - Service Level Managers can be viewed with a certain amount of suspicion by both the IT service provider staff and the customer representatives. This is due to the dual nature of the job, where they are acting as an unofficial customer representative when talking to IT staff, and as an IT provider representative when talking to the customers. This is usually aggravated when having to represent the 'opposition's' point of view in any meeting etc. To avoid this, the Service Level Manager should be as open and helpful as possible (within the bounds of any commercial propriety) when dealing with both sides, although colleagues should never be openly criticized.

These reviews should ensure that the services covered and the targets for each are still relevant - and that nothing significant has changed that invalidates the agreement in any way (this should include infrastructure changes, business changes, supplier changes, etc.). Where changes are made, the agreements must be updated under Change Management control to reflect the new situation. If all agreements are recorded as CIs within the CMS, it is easier to assess the impact and implement the changes in a controlled manner.

These reviews should also include the overall strategy documents, to ensure that all services and service agreements are kept in line with business and IT strategies and policies.

More information on KPIs, measurements and improvements can be found in the following section and in the Continual Service Improvement publication.

Hints and tips: Don't fall into the trap of using percentages as the only metric. It is easy to get caught out when there is a small system with limited measurement points (i.e. a single failure in a population of 100 is only 1%; a single failure in a population of 50 is 2% - if the target is 98.5%, then the SLA is already breached). Always go for number of incidents rather than a percentage on populations of less than 100, and be careful when targets are accepted. This is something organizations have learned the hard way.
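The arithmetic behind this warning is easy to reproduce. The following minimal sketch uses the population sizes and the 98.5% target from the example above; the function names are illustrative only.

```python
def achieved_pct(population, failures):
    """Percentage of measurement points that did not fail."""
    return 100.0 * (population - failures) / population


def meets_target(population, failures, target_pct):
    """True if the achieved percentage is at or above the target."""
    return achieved_pct(population, failures) >= target_pct


target = 98.5
for population in (100, 50):
    pct = achieved_pct(population, failures=1)
    verdict = "met" if meets_target(population, 1, target) else "breached"
    print(f"population {population}: one failure gives {pct}% - target {verdict}")
```

With a population of 50, a 98.5% target tolerates no failures at all, so expressing the target as a number of incidents makes the real commitment much clearer.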

The SLM process often generates a good starting point for a SIP - and the service review process may drive this, but all processes and all areas of the service provider organization should be involved in the SIP. Where an underlying difficulty has been identified that is adversely impacting on service quality, SLM must, in conjunction with Problem Management and Availability Management, instigate a SIP to identify and implement whatever actions are necessary to overcome the difficulties and restore service quality. SIP initiatives may also focus on such issues as user training, service and system testing and documentation. In these cases, the relevant people need to be involved and adequate feedback given to make improvements for the future. At any time, a number of separate initiatives that form part of the SIP may be running in parallel to address difficulties with a number of services.

Some organizations have established an up-front annual budget held by SLM from which SIP initiatives can be funded. This means that action can be undertaken quickly and that SLM is demonstrably effective. This practice should be encouraged and expanded to enable SLM to become increasingly proactive and predictive. The SIP needs to be owned and managed, with all improvement actions being assessed for risk and impact on services, customers and the business, and then prioritized, scheduled and implemented.

If an organization is outsourcing its Service Delivery to a third party, the issue of service improvement should be discussed at the outset and covered (and budgeted for) in the contract, otherwise there is no incentive during the lifetime of the contract for the supplier to improve service targets if they are already meeting contractual obligations and additional expenditure is needed to make the improvements.

SLM is crucial in providing information on the quality of IT service provided to the customer, and information on the customer's expectation and perception of that quality of service. This information should be widely available to all areas of the service provider organization.

If customer representatives exist who are able genuinely to represent the views of the customer community, because they frequently meet with a wide selection of customers, this is ideal. Unfortunately, all too often representatives are head-office based and seldom come into contact with genuine service customers. In the worst case, the Service Level Manager may have to carry out his or her own programme of discussions and meetings with customers to ensure true requirements are identified.

Anecdote: On negotiating the current and support hours for a large service, an organization found a discrepancy in the required time of usage between Head Office and the field office's customers. Head Office (with a limited user population) wanted service hours covering 8.00 to 18.00, whereas the field office (with at least 20 times the user population) stated that starting an hour earlier would be better - but all offices closed to the public by 16.00 at the latest, and so wouldn't require a service much beyond this. Head Office won the 'political' argument, and so the 8.00 to 18.00 band was set. When the service came to be used (and hence monitored) it was found that service extensions were usually asked for by the field office to cover the extra hour in the morning, and actual usage figures showed that the service had not been accessed after 17.00, except on very rare occasions. The Service Level Manager was blamed by the IT staff for having to cover a late shift, and by the customer representative for charging for a service that was not used (i.e. staff and running costs).

Hints and tips: Care should be taken when opening discussions on service levels for the first time, as 'current issues' (the failure that occurred yesterday) or long-standing grievances (that old printer that we have been trying to get replaced for ages) are likely to be aired at the outset. Important though these may be, they must not be allowed to get in the way of establishing the longer-term requirements. Be aware, however, that it may be necessary to address any issues raised at the outset before gaining any credibility to progress further.

If there has been no previous experience of SLM, then it is advisable to start with a draft SLA. A decision should be made on which service or customers are to be used for the draft. It is helpful if the selected customer is enthusiastic and wishes to participate - perhaps because they are anxious to see improvements in service quality. The results of an initial customer perception survey may give pointers to a suitable initial draft SLA.

Hints and tips: Don't pick an area where large problems exist as the first SLA. Try to pick an area that is likely to show some quick benefits and develop the SLM process. Nothing encourages acceptance of a new idea quicker than success.

One difficulty sometimes encountered is that staff at different levels within the customer community may have different objectives and perceptions. For example, a senior manager may rarely use a service and may be more interested in issues such as value for money and output, whereas a junior member of staff may use the service throughout the day, and may be more interested in issues such as responsiveness, usability and reliability. It is important that all of the appropriate and relevant customer requirements, at all levels, are identified and incorporated in SLAs.

Some organizations have formed focus groups from different levels from within the customer community to help ensure that all issues have been correctly addressed. This takes additional resources, but can be well worth the effort.

The other group of people that has to be consulted during the whole of this process is the appropriate representatives from within the IT provider side (whether internal or from an external supplier or partner). They need to agree that targets are realistic, achievable and affordable. If they are not, further negotiations are needed until a compromise acceptable to all parties is agreed. The views of suppliers should also be sought, and any contractual implications should be taken into account during the negotiation stages.

Where no past monitored data is available, it is advisable to leave the agreement in draft format for an initial period, until monitoring can confirm that initial targets are achievable. Targets may have to be re-negotiated in some cases. Many organizations negotiate an agreed timeframe for IT to negotiate and create a baseline for establishing realistic service targets. When targets and timeframes have been confirmed, the SLAs must be signed.

Once the initial SLA has been completed, and any early difficulties overcome, then move on and gradually introduce SLAs for other services/customers. If it is decided from the outset to go for a multi-level structure, it is likely that the corporate-level issues have to be covered for all customers at the time of the initial SLA. It is also worth trialling the corporate issues during this initial phase.

Hints and tips: Don't go for easy targets at the corporate level. They may be easy to achieve, but have no value in improving service quality or credibility. Also, if the targets are set at a sufficiently high level, the corporate SLA can be used as the standard that all new services should reach.

One point to ensure is that at the end of the drafting and negotiating process, the SLA is actually signed by the appropriate managers on the customer and IT service provider sides to the agreement. This gives a firm commitment by both parties that every attempt will be made to meet the agreement. Generally speaking, the more senior the signatories are within their respective organizations, the stronger the message of commitment. Once an SLA is agreed, wide publicity needs to be used to ensure that customers, users and IT staff alike are aware of its existence and of the key targets.

Steps must be taken to advertise the existence of the new SLAs and OLAs amongst the Service Desk and other support groups, with details of when they become operational. It may be helpful to extract key targets from these agreements into tables that can be on display in support areas, so that staff are always aware of the targets to which they are working. If support tools allow, these targets should be recorded within the tools, such as within a Service Catalogue or CMS, so that their content can be made widely available to all personnel. They should also be included as thresholds, and automatically alerted against when a target is threatened or actually breached. SLAs, OLAs and the targets they contain must also be publicized amongst the user community, so that users are aware of what they can expect from the services they use, and know at what point to start expressing dissatisfaction.
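Where targets are held in a support tool, alerting against them can be as simple as comparing elapsed time with the target. The sketch below assumes an incident resolution target in minutes and treats a target as 'threatened' once a chosen fraction of it has elapsed; the 80% warning fraction and the sample figures are hypothetical and would need to be agreed locally.

```python
def target_status(elapsed_minutes, target_minutes, warning_fraction=0.8):
    """Classify an open incident against its resolution target.

    warning_fraction is an assumed threshold at which the target is
    considered threatened but not yet breached.
    """
    if elapsed_minutes > target_minutes:
        return "breached"
    if elapsed_minutes >= warning_fraction * target_minutes:
        return "threatened"
    return "ok"


# Hypothetical open incidents: (reference, elapsed minutes, target minutes).
open_incidents = [("INC-1", 100, 240), ("INC-2", 200, 240), ("INC-3", 250, 240)]

for reference, elapsed, target in open_incidents:
    status = target_status(elapsed, target)
    if status != "ok":
        print(f"ALERT {reference}: target {status} ({elapsed}/{target} min)")
```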

It is important that the Service Desk staff are committed to the SLM process, and become proactive ambassadors for SLAs, embracing the necessary service culture, as they are the first contact point for customers' incidents, complaints and queries. If the Service Desk staff are not fully aware of SLAs in place, and do not act on their contents, customers very quickly lose faith in SLAs.

The risks associated with the provision of an accurate Service Level Management process are:
