

The objectives of Capacity Management are to:

In general, human resource management is a line management responsibility, though the staffing of a Service Desk should use identical Capacity Management techniques. The scheduling of human resources, staffing levels, skill levels and capability levels should therefore be included within the scope of Capacity Management. The driving force for Capacity Management should be the business requirements of the organization and the planning of the resources needed to provide service levels in line with SLAs and OLAs. Capacity Management needs to understand the total IT and business environments, including:

Understanding all of this will enable Capacity Management to ensure that all the current and future capacity and performance aspects of services are provided cost effectively.

Capacity Management is also about understanding the potential for the delivery of new services. New technology needs to be understood and, if appropriate, used to innovate and deliver the services required by the customer. Capacity Management needs to recognize that the rate of technological change will probably increase and that new technology should be harnessed to ensure that the IT services continue to satisfy changing business expectations. A direct link to the Service Strategy and Service Portfolio is needed to ensure that emerging technologies are considered in future service planning.

The Capacity Management process should include:

Managing the capacity of large distributed IT infrastructures is a complex and demanding task, especially when the IT capacity and the financial investment required are ever-increasing. Therefore it makes even more sense to plan for growth. While the cost of the upgrade to an individual component in a distributed environment is usually less than the upgrade to a component in a mainframe environment, there are often many more components in the distributed environment that need to be upgraded. Also, there could now be economies of scale, because the cost per individual component could be reduced when many components need to be purchased. Capacity Management should have input to the Service Portfolio and procurement process to ensure that the best deals with suppliers are negotiated.

Capacity Management provides the necessary information on current and planned resource utilization of individual components to enable organizations to decide, with confidence:

Many of the other processes are less effective if there is no input to them from the Capacity Management process. For example:

Capacity Management is one of the forward-looking processes that, when properly carried out, can forecast business events and impacts often before they happen. Good Capacity Management ensures that there are no surprises with regard to service and component design and performance.

Capacity Management has a close, two-way relationship with the Service Strategy and planning processes within an organization. On a regular basis, the long-term strategy of an organization is encapsulated in an update of the business plans. The Service Strategy will reflect the business plans and strategy, which are developed from the organization's understanding of the external factors such as the competitive marketplace, economic outlook and legislation, and its internal capability in terms of manpower, delivery capability, etc. Often a shorter-term tactical plan, or business change plan is developed to implement the changes necessary in the short to medium term to progress the overall business plan and Service Strategy. Capacity Management needs to understand the short-, medium- and long-term plans of the business while providing information on the latest ideas, trends and technologies being developed by the suppliers of computing hardware and software.

The organization's business plans drive the specific IT Service Strategy, the contents of which Capacity Management needs to be familiar with, and to which Capacity Management needs to have had significant and ongoing input. The right level of capacity at the right time is critical. Service Strategy plans will be helpful to capacity planning by identifying the timing for acquiring and implementing new technologies, hardware and software.

Capacity Management processes and planning must be involved in all stages of the Service Lifecycle from Strategy and Design, through Transition and Operation to Improvement. From a strategic perspective, the Service Portfolio contains the IT resources and capabilities. The advent of Service Oriented Architecture, virtualization and the use of value networks in IT service provision are important factors in the management of capacity. The appropriate capacity and performance should be designed into services and components from the initial design stages. This will ensure not only that the performance of any new or changed service meets its expected targets, but also that all existing services continue to meet all of their targets. This is the basis of stable service provision.

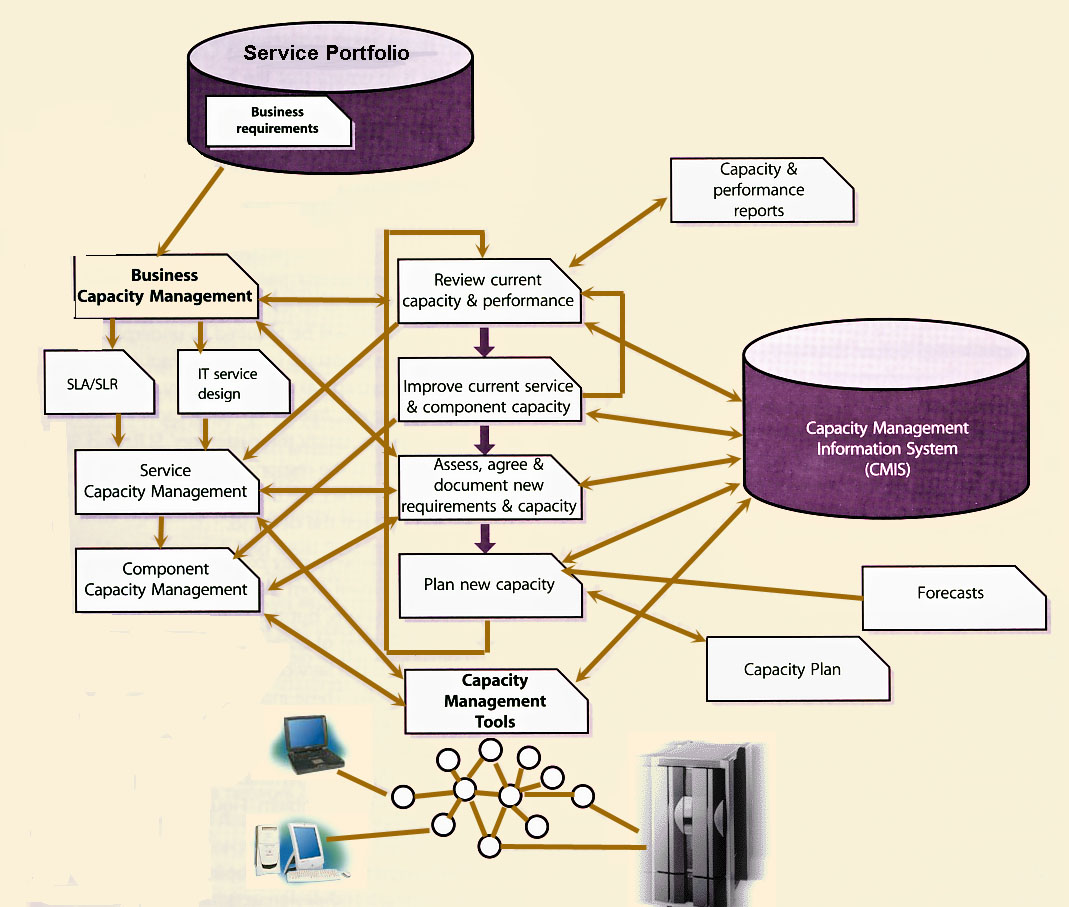

The overall Capacity Management process continually tries to match IT resources and capacity, cost-effectively, to the ever-changing needs and requirements of the business. This requires the tuning and optimization of the current resources and the effective estimation and planning of the future resources, as illustrated in Figure 4.8.

Capacity Management is an extremely technical, complex and demanding process, and in order to achieve results, it requires three supporting sub-processes.

One of the key activities of Capacity Management is to produce a plan that documents the current levels of resource utilization and service performance and, after consideration of the Service Strategy and plans, forecasts the future requirements for new IT resources to support the IT services that underpin the business activities. The plan should indicate clearly any assumptions made. It should also include any recommendations quantified in terms of resource required, cost, benefits, impact, etc.

The production and maintenance of a Capacity Plan should occur at pre-defined intervals. It is, essentially, an investment plan and should therefore be published annually, in line with the business or budget lifecycle, and completed before the start of negotiations on future budgets. A quarterly re-issue of the updated plan may be necessary to take into account changes in service plans, to report on the accuracy of forecasts and to make or refine recommendations. This takes extra effort but, if it is regularly updated, the Capacity Plan is more likely to be accurate and to reflect the changing business need.

The typical contents of a Capacity Plan are described in Appendix J.

Figure 4.8 The Capacity Management process

This sub-process translates business needs and plans into requirements for service and IT infrastructure, ensuring that the future business requirements for IT services are quantified, designed, planned and implemented in a timely fashion. This can be achieved by using the existing data on the current resource utilization by the various services and resources to trend, forecast, model or predict future requirements. These future requirements come from the Service Strategy and Service Portfolio detailing new processes and service requirements, changes, improvements, and also the growth in the existing services.

There are many similar activities that are performed by each of the above sub-processes, but each sub-process has a very different focus. Business Capacity Management is focused on the current and future business requirements, while Service Capacity Management is focused on the delivery of the existing services that support the business, and Component Capacity Management is focused on the IT infrastructure that underpins service provision. The role that each of these sub-processes plays in the overall process and the use of management tools is illustrated in Figure 4.9.

Figure 4.9 Capacity Management Sub-processes

The tools used by Capacity Management need to conform to the organization's management architecture and integrate with other tools used for the management of IT systems and automating IT processes. The monitoring and control activities within Service Operation will provide a good basis for the tools to support and analyse information for Capacity Management.

The reactive activities of Capacity Management should include:

Key message: The more successful the proactive and predictive activities of Capacity Management, the less need there will be for the reactive activities of Capacity Management.

4.3.5.1 Business Capacity Management

Figure 4.10 Capacity must support business requirements

The main objective of the Business Capacity Management sub-process is to ensure that the future business requirements (customer outcomes) for IT services are considered and understood, and that sufficient IT capacity to support any new or changed services is planned and implemented within an appropriate timescale. Figure 4.10 illustrates that BCM is influenced by the business patterns of activity and how services are used.

The Capacity Management process must be responsive to changing requirements for capacity demand. New services or changed services will be required to underpin the changing business. Existing services will require modification to provide extra functionality. Old services will become obsolete, freeing up spare capacity. As a result, the ability to satisfy the customers' SLRs and SLAs will be affected. It is the responsibility of Capacity Management to predict the demand for capacity for such changes and manage the demand.

These new requirements may come to the attention of Capacity Management from many different sources and for many different reasons, but the principal sources of supply should be the Pattern of Business Activity from Demand Management and the Service Level Packages produced for the Service Portfolio. These indicate a window of future predictors for capacity. Such examples could be a recommendation to upgrade to take advantage of new technology, or the implementation of a tuning activity to resolve a performance problem. Figure 4.11 shows the cycle of demand management.

Figure 4.11 Capacity Management takes particular note of demand pattern

Capacity Management needs to be included in all strategic, planning and design activities, being involved as early as possible within each process, such as:

Key message: Capacity Management should not be a last-minute 'tick in the box' just prior to customer acceptance and operational acceptance.

If early involvement can be achieved from Capacity Management within these processes, then the planning and design of IT capacity can be closely aligned with business requirements and can ensure that service targets can be achieved and maintained.

Assist with Agreeing on Service Level Requirements

Capacity Management should assist SLM in understanding the customers' capacity and performance requirements, in terms of required service/system response times, expected throughput, patterns of usage and volume of users. Capacity Management should help in the negotiation process by providing possible solutions to a number of scenarios. For example, if the volume of users is less than 2,000, then response times can be guaranteed to be less than two seconds. If more than 2,000 users connect concurrently, then extra network bandwidth is needed to guarantee the required response time, or a slower response time will have to be accepted. Modeling, trending or application sizing techniques are often employed here to ensure that predictions accurately reflect the real situation.

Design, Procure or Amend Service Configuration

Capacity Management should be involved in the design of new or changing services and make recommendations for the procurement of hardware and software, where performance and/or capacity are factors. In some instances Capacity Management instigates the implementation of the new requirement through Change Management, where it is also involved as a member of the Change Advisory Board. In the interest of balancing cost and capacity, the Capacity Management process obtains the costs of alternative proposed solutions and recommends the most appropriate cost-effective solution.

Verify SLA

The SLA should include details of the anticipated service throughputs and the performance requirements. Capacity Management advises SLM on achievable targets that can be monitored and on which the Service Design has been based. Confidence that the Service Design will meet the SLRs and provide the ability for future growth can be gained by using modelling, trending or sizing techniques.

Support SLA Negotiation

The results of the predictive techniques provide the verification of service performance capabilities. There may be a need for SLM to renegotiate SLAs based on these findings. Capacity Management provides support to SLM should renegotiations be necessary, by recommending potential solutions and associated cost information. Once assured that the requirements are achievable, it is the responsibility of SLM to agree the service levels and sign the SLA.

Control and Implementation

All changes to service and resource capacity must follow all IT processes such as Change, Release, Configuration and Project Management to ensure that the right degree of control and coordination is in place on all changes and that any new or changed components are recorded and tracked through their lifecycle.

4.3.5.2 Service Capacity Management

The main objective of the Service Capacity Management sub-process is to identify and understand the IT services, their use of resource, working patterns, peaks and troughs, and to ensure that the services meet their SLA targets, i.e. to ensure that the IT services perform as required. In this sub-process, the focus is on managing service performance, as determined by the targets contained in the agreed SLAs or SLRs.

The Service Capacity Management sub-process ensures that the services meet the agreed capacity service targets. The monitored service provides data that can identify trends from which normal service levels can be established. By regular monitoring and comparison with these levels, exception conditions can be defined, identified and reported on. Therefore Capacity Management informs SLM of any service breaches or near misses.

There will be occasions when incidents and problems are referred to Capacity Management from other processes, or it is identified that a service could fail to meet its SLA targets. On some of these occasions, the cause of the potential failure may not be resolved by Component Capacity Management. For example, when the failure is analyzed it may be found that there is no lack of capacity, or no individual component is over-utilized. However, if the design or coding of an application is inefficient, then the service performance may need to be managed, as well as individual hardware or software resources. Service Capacity Management should also be monitoring service workloads and transactions to ensure that they remain within agreed limitations and thresholds.

The key to successful Service Capacity Management is to forecast issues, wherever possible, by monitoring changes in performance and monitoring the impact of changes. So this is another sub-process that has to be proactive and predictive, even pre-emptive, rather than reactive. However, there are times when it has to react to specific performance problems. From a knowledge and understanding of the performance requirements of each of the services being used, the effects of changes in the use of services can be estimated, and actions taken to ensure that the required service performance can be achieved.

4.3.5.3 Component Capacity Management

The main objective of Component Capacity Management (CCM) is to identify and understand the performance, capacity and utilization of each of the individual components within the technology used to support the IT services, including the infrastructure, environment, data and applications. This ensures the optimum use of the current hardware and software resources in order to achieve and maintain the agreed service levels. All hardware components and many software components in the IT infrastructure have a finite capacity that, when approached or exceeded, has the potential to cause performance problems.

This sub-process is concerned with components such as processors, memory, disks, network bandwidth, network connections etc. So information on resource utilization needs to be collected on a continuous basis. Monitors should be installed on the individual hardware and software components, and then configured to collect the necessary data, which is accumulated and stored over a period of time. This is an activity generally carried out through monitoring and control within Service Operation. A direct feedback to CCM should be applied within this sub-process.

As in Service Capacity Management, the key to successful CCM is to forecast issues, wherever possible, and it therefore has to be proactive and predictive as well. However, there are times when CCM has to react to specific problems that are caused by a lack of capacity, or the inefficient use of the component. From a knowledge and understanding of the use of resource by each of the services being run, the effects of changes in the use of services can be estimated and hardware or software upgrades can be budgeted and planned. Alternatively, services can be balanced across the existing resources to make most effective use of the current resources.

Figure 4.12 Iterative ongoing activities of Capacity Management

The activities described in this section are necessary to support the sub-processes of Capacity Management, and these activities can be done either reactively or proactively, or even pre-emptively.

The major difference between the sub-processes is in the data that is being monitored and collected, and the perspective from which it is analyzed. For example, the level of utilization of individual components in the infrastructure - such as processors, disks, and network links - is of interest in Component Capacity Management, while the transaction throughput rates and response times are of interest in Service Capacity Management. For Business Capacity Management, the transaction throughput rates for the online service need to be translated into business volumes - for example, in terms of sales invoices raised or orders taken. The biggest challenge facing Capacity Management is to understand the relationship between the demands and requirements of the business and the business workload, and to be able to translate these in terms of the impact and effect of these on the service and resource workloads and utilizations, so that appropriate thresholds can be set at each level.

Tuning and Optimization Activities

A number of the activities need to be carried out iteratively and form a natural cycle, as illustrated in Figure 4.12.

These activities provide the basic historical information and triggers necessary for all of the other activities and processes within Capacity Management. Monitors should be established on all the components and for each of the services. The data should be analyzed using, wherever possible, expert systems to compare usage levels against thresholds. The results of the analysis should be included in reports, and recommendations made as appropriate. Some form of control mechanism may then be put in place to act on the recommendations. This may take the form of balancing services, balancing workloads, changing concurrency levels and adding or removing resources. All of the information accumulated during these activities should be stored in the Capacity Management Information System (CMIS) and the cycle then begins again, monitoring any changes made to ensure they have had a beneficial effect and collecting more data for future actions.

Utilization Monitoring

The monitors should be specific to particular operating systems, hardware configurations, applications, etc. It is important that the monitors can collect all the data required by the Capacity Management process, for a specific component or service. Typical monitored data includes:

In considering the data that needs to be included, a distinction needs to be drawn between the data collected to monitor capacity (e.g. throughput) and the data to monitor performance (e.g. response times). Data of both types is required by the Service and Component Capacity Management sub-processes. This monitoring and collection needs to incorporate all components in the service, thus monitoring the 'end-to-end' customer experience. The data should be gathered at total resource utilization level and at a more detailed profile for the load that each service places on each particular component. This needs to be carried out across the whole technology, host or server, the network, local server and client or workstation. Similarly the data needs to be collected for each service.

Part of the monitoring activity should be of thresholds and baselines or profiles of the normal operating levels. If these are exceeded, alarms should be raised and exception reports produced. These thresholds and baselines should have been determined from the analysis of previously recorded data, and can be set at both the component and service level. All thresholds should be set below the level at which the component or service is over-utilized, or below the targets in the SLAs. When the threshold is reached or threatened, there is still an opportunity to take corrective action before the SLA has been breached, or the resource has become over-utilized and there has been a period of poor performance. The monitoring and management of these events, thresholds and alarms is covered in detail in the Service Operation publication.
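The idea of setting a threshold below the SLA target or over-utilization level, so that corrective action is still possible, can be sketched as follows. This is a minimal illustration rather than a prescribed implementation: the `Threshold` class, the `classify` function and the 70%/85% figures are all hypothetical, and the sketch assumes a metric where higher values are worse (such as utilization or response time).

```python
from dataclasses import dataclass


@dataclass
class Threshold:
    warning: float  # hypothetical early-warning level, set below the limit
    limit: float    # SLA target or level at which the resource is over-utilized


def classify(value: float, threshold: Threshold) -> str:
    """Return 'ok', 'warning' or 'breach' for one measured value."""
    if value >= threshold.limit:
        return "breach"
    if value >= threshold.warning:
        return "warning"  # still time to act before the limit is reached
    return "ok"


# Hypothetical processor utilization readings, in percent.
cpu = Threshold(warning=70.0, limit=85.0)
readings = [55.0, 72.5, 90.0]
statuses = [classify(reading, cpu) for reading in readings]
print(statuses)
```

A reading in the "warning" band is the case the text describes: the threshold has been reached, but the SLA has not yet been breached.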

Often it is more difficult to get the data on the current business volumes as required by the Business Capacity Management sub-process. These statistics may need to be derived from the data available to the Service and Component Capacity Management sub-processes.

Response Time Monitoring

Many SLAs have user response times as one of the targets to be measured, but equally many organizations have great difficulty in supporting this requirement. User response times of IT and network services can be monitored and measured by the following:

In some cases, a combination of a number of systems may be used. The monitoring of response times is a complex process even if it is an in-house service running on a private network. If this is an external internet service, the process is much more complex because of the sheer number of different organizations and technologies involved.

Anecdote: A private company with a major website implemented a website monitoring service from an external supplier that would provide automatic alarms on the availability and responsiveness of their website. The availability and speed of the monitoring points were lower than those of the website being monitored. Therefore the figures produced by the service were of the availability and responsiveness of the monitoring service itself, rather than those of the monitored website.

Hints and tips: When implementing external monitoring services, ensure that the service levels and performance commitments of the monitoring service are in excess of those of the service(s) being monitored.

Analysis

The data collected from the monitoring should be analyzed to identify trends from which the normal utilization and service levels, or baselines, can be established. By regular monitoring and comparison with this baseline, exception conditions in the utilization of individual components or service thresholds can be defined, and breaches or near misses in the SLAs can be reported and actioned. Also the data can be used to predict future resource usage, or to monitor actual business growth against predicted growth.
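One simple way to predict future resource usage from collected data is a straight-line trend. The sketch below fits a least-squares line to equally spaced observations and extrapolates it. It is only one possible technique, it assumes growth is roughly linear, and the function names and the monthly figures are hypothetical.

```python
def linear_trend(values):
    """Least-squares slope and intercept for values observed at x = 0, 1, 2, ...

    Needs at least two observations.
    """
    n = len(values)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def forecast(values, periods_ahead):
    """Extrapolate the fitted line periods_ahead steps past the last observation."""
    slope, intercept = linear_trend(values)
    return intercept + slope * (len(values) - 1 + periods_ahead)


# Hypothetical peak-hour utilization (%) for the last six months.
monthly_peak = [50.0, 52.0, 54.0, 56.0, 58.0, 60.0]
in_six_months = forecast(monthly_peak, 6)
print(in_six_months)
```

Comparing such a forecast with the actual figures recorded later is one way to monitor actual growth against predicted growth.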

Analysis of the data may identify issues such as:

The use of each component and service needs to be considered over the short, medium and long term, and the minimum, maximum and average utilization for these periods recorded. Typically, the short-term pattern covers the utilization over a 24-hour period, while the medium term may cover a one- to four-week period, and the long term a year-long period. Over time, the trend in the use of the resource by the various IT services will become apparent. The usefulness of this information is further enhanced by recording any observed contributing factors to peaks or valleys in utilization - for example, if a change of business process or staffing coincides with any deviations from the normal utilization.
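Recording the minimum, maximum and average utilization for each period can be sketched as below. The window lengths follow the periods named in the text (24 hours, four weeks, one year). The function names and the sample history are hypothetical, and hourly sampling is an assumption.

```python
from statistics import mean

# Window lengths in hours, following the periods named in the text.
WINDOWS = {
    "short (24 h)": 24,
    "medium (4 weeks)": 24 * 28,
    "long (1 year)": 24 * 365,
}


def summarize(samples):
    """Minimum, maximum and average of a list of utilization samples."""
    return {"min": min(samples), "max": max(samples), "avg": round(mean(samples), 1)}


def summarize_windows(hourly_utilization):
    """Summarize the most recent samples per window (all samples if history is shorter)."""
    return {
        name: summarize(hourly_utilization[-hours:])
        for name, hours in WINDOWS.items()
    }


# Hypothetical history: 48 hourly readings (%), a quiet day followed by a busier one.
history = [20.0] * 24 + [30.0] * 12 + [80.0] * 12
report = summarize_windows(history)
for name, stats in report.items():
    print(name, stats)
```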

It is important to understand the utilization in each of these periods, so that changes in the use of any service can be related to predicted changes in the level of utilization of individual components. The ability to identify the specific hardware or software components on which a particular IT service depends is improved greatly by an accurate, up-to-date and comprehensive CMS.

When the utilization of a particular resource is considered, it is important to understand both the total level of utilization and the utilization by individual services of the resource.

Example: If a processor that is 75% loaded during the peak hour is being used by two different services, A and B, it is important to know how much of the total 75% is being used by each service. Assuming the system overhead on the processor is 5%, the remaining 70% load could be split evenly between the two services. If a change in either Service A or Service B is estimated to double its loading on the processor, then the processor would be overloaded. However, if Service A uses 60% and Service B uses 10% of the processor, then the processor would be overloaded if Service A doubled its loading on the processor. But if Service B doubled its loading on the processor, then the processor would not necessarily be overloaded.
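The arithmetic in this example can be checked with a short sketch. It assumes, for illustration only, that a service's load scales linearly when its use doubles and that the system overhead stays at 5%. The function name is hypothetical.

```python
def load_after_doubling(overhead, service_loads, doubled):
    """Total processor load (%) if one service doubles its loading."""
    new_loads = dict(service_loads)
    new_loads[doubled] *= 2
    return overhead + sum(new_loads.values())


OVERHEAD = 5.0                       # system overhead from the example
even = {"A": 35.0, "B": 35.0}        # 70% split evenly
skewed = {"A": 60.0, "B": 10.0}      # 70% split 60/10

even_a = load_after_doubling(OVERHEAD, even, "A")
even_b = load_after_doubling(OVERHEAD, even, "B")
skewed_a = load_after_doubling(OVERHEAD, skewed, "A")
skewed_b = load_after_doubling(OVERHEAD, skewed, "B")
print(even_a, even_b, skewed_a, skewed_b)
```

With the even split, doubling either service takes the total past 100%. With the 60/10 split, only doubling Service A does so. Doubling Service B leaves the total at 85%, which may or may not be acceptable depending on the threshold set for the processor.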

Tuning

The analysis of the monitored data may identify areas of the configuration that could be tuned to better utilize the service, system and component resources or improve the performance of the particular service.

Tuning techniques that are of assistance include:

Before implementing any of the recommendations arising from the tuning techniques, it may be appropriate to consider testing the validity of the recommendation. For example, 'Can Demand Management be used to avoid the need to carry out any tuning?' or 'Can the proposed change be modeled to show its effectiveness before it is implemented?'

Implementation

The objective of this activity is to introduce to the live operation services any changes that have been identified by the monitoring, analysis and tuning activities. The implementation of any changes arising from these activities must be undertaken through a strict, formal Change Management process. The impact of system tuning changes can have major implications on the customers of the service. The impact and risk associated with these types of changes are likely to be greater than those of other types of change.

It is important that further monitoring takes place, so that the effects of the change can be assessed. It may be necessary to make further changes or to regress some of the original changes.

Exploitation of New Technology

This involves understanding new techniques and new technology and how they can be used to support the business and innovate improvements. It may be appropriate to introduce new technology to improve the provision and support of the IT services on which the organization is dependent. This information can be gathered by studying professional literature (magazine and press articles) and by attending:

Each of these provides sources of information relating to potential techniques, technology, hardware and software, which might be advantageous for IT to implement to realize business benefits. However, at all times Capacity Management should recognize that the introduction and use of this new technology must be cost-justified and deliver real benefit to the business. It is not just the new technology itself that is important: Capacity Management should also stay aware of the advantages to be obtained from the use of new technologies, using techniques such as 'grid computing', 'virtualization' and 'on-demand computing'.

Designing Resilience

Capacity Management assists with the identification and improvement of the resilience within the IT infrastructure or any subset of it, wherever it is cost-justified. In conjunction with Availability Management, Capacity Management should use techniques such as Component Failure Impact Analysis (CFIA, as described in section 4.4 on Availability Management) to identify how susceptible the current configuration is to the failure or overload of individual components and make recommendations on any cost-effective solutions.

Capacity Management should be able to identify the impact on the available resources of particular failures, and the potential for running the most important services on the remaining resources. So the provision of spare capacity can act as resilience or fail-over in failure situations.

The requirements for resilience in the IT infrastructure should always be considered at the time of the service or system design. However, for many services, the resilience of the service is only considered after it is in live operational use. Incorporating resilience into Service Design is much more effective and efficient than trying to add it at a later date, once a service has become operational.

4.3.5.5 Threshold Management and Control

The technical limits and constraints on the individual services and components can be used by the monitoring activities to set the thresholds at which warnings and alarms are raised and exception reports are produced. However, care must be exercised when setting thresholds, because many thresholds are dependent on the work being run on the particular component.

The management and control of service and component thresholds is fundamental to the effective delivery of services to meet their agreed service levels. It ensures that all service and component thresholds are maintained at the appropriate levels and are continuously, automatically monitored, and alerts and warnings generated when breaches occur. Whenever monitored thresholds are breached or threatened, then alarms are raised and breaches, warnings and exception reports are produced. Analysis of the situation should then be completed and remedial action taken whenever justified, ensuring that the situation does not recur. The same data items can be used to identify when SLAs are breached or likely to be breached or when component performance degrades or is likely to be degraded. By setting thresholds below or above the actual targets, action can be taken and a breach of the SLA targets avoided. Threshold monitoring should not only alarm on exceeding a threshold, but should also monitor the rate of change and predict when the threshold will be reached. For example, a disk-space monitor should monitor the rate of growth and raise an alarm when the current rate will cause the disk to be full within the next N days. If a 1GB disk has reached 90% capacity, and is growing at 100KB per day, it will be 1,000 days before it is full. If it is growing at 10MB per day, it will only be 10 days before it is full. The monitoring and management of these events and alarms is covered in detail in the Service Operation publication.
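The disk-space example can be sketched as a rate-of-change check. This is an illustration under stated assumptions: growth is taken to continue at the current constant rate, decimal units are used (1GB = 1,000MB = 1,000,000KB) to match the figures in the text, and the function names and the 30-day horizon are hypothetical.

```python
KB, MB, GB = 10**3, 10**6, 10**9  # decimal units, matching the worked figures


def days_until_full(capacity_bytes, used_bytes, growth_bytes_per_day):
    """Days until the disk is full at the current growth rate, or None if not growing."""
    if growth_bytes_per_day <= 0:
        return None
    return (capacity_bytes - used_bytes) / growth_bytes_per_day


def should_alarm(days_left, horizon_days):
    """Raise an alarm when the disk will be full within the next N (horizon) days."""
    return days_left is not None and days_left <= horizon_days


capacity = 1 * GB
used = 900 * MB  # 90% of capacity

slow = days_until_full(capacity, used, 100 * KB)
fast = days_until_full(capacity, used, 10 * MB)
print(slow, should_alarm(slow, 30))
print(fast, should_alarm(fast, 30))
```

Both disks are at the same 90% level, so a static threshold would treat them alike. Only the growth rate distinguishes the one that needs attention now.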

There may be occasions when optimization of infrastructure components and resources is needed to maintain or improve performance or throughput. This can often be done through Workload Management, which is a generic term to cover such actions as:

It will only be possible to manage workloads effectively if a good understanding exists of which workloads will run at what time and how much resource utilization each workload places on the IT infrastructure. Diligent monitoring and analysis of workloads, together with a comprehensive CMIS, are therefore needed on an ongoing operational basis.

4.3.5.6 Demand Management

The prime objective of Demand Management is to influence user and customer demand for IT services and manage the impact on IT resources.

This activity can be carried out as a short-term requirement because there is insufficient current capacity to support the work being run, or, as a deliberate policy of IT management, to limit the required capacity in the long term.

Short-term Demand Management may occur when there has been a partial failure of a critical resource in the IT infrastructure. For example, if there has been a failure of a processor within a multi-processor server, it may not be possible to run the full range of services. However, a limited subset of the services could be run. Capacity Management should be aware of the business priority of each of the services, know the resource requirements of each service (in this case, the amount of processor power required to run the service) and then be able to identify which services can be run while there is a limited amount of processor power available.
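As a rough illustration of the processor-failure case, the sketch below picks which services to run when only part of the processor power is available. The `Service` record, the priority scale (1 is the most important) and the figures are hypothetical, and the greedy selection is just one possible policy, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    name: str
    priority: int      # business priority; 1 = most important (assumed scale)
    cpu_units: float   # processor power the service needs (hypothetical unit)


def services_to_run(services, available_cpu_units):
    """Pick services in business-priority order until the processor power runs out.

    A service that does not fit is skipped, and lower-priority services that
    still fit in the remaining capacity are allowed to run.
    """
    selected = []
    remaining = available_cpu_units
    for service in sorted(services, key=lambda s: (s.priority, s.cpu_units)):
        if service.cpu_units <= remaining:
            selected.append(service)
            remaining -= service.cpu_units
    return selected


catalogue = [
    Service("billing", priority=1, cpu_units=4),
    Service("reporting", priority=3, cpu_units=3),
    Service("email", priority=2, cpu_units=2),
    Service("archive", priority=4, cpu_units=1),
]

# One of two processors has failed, leaving 7 of the usual 14 units.
print([s.name for s in services_to_run(catalogue, available_cpu_units=7)])
# ['billing', 'email', 'archive']
```

Whether a low-priority service should run ahead of a skipped higher-priority one is a business decision; a stricter policy would stop at the first service that does not fit.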

Long-term Demand Management may be required when it is difficult to cost-justify an expensive upgrade. For example, many processors are heavily utilized for only a few hours each day, typically 10:00am-12:00pm and 2:00pm-4:00pm. Within these periods, the processor may be overloaded for only one or two hours. For the hours between 6:00pm and 8:00am, these processors are only very lightly loaded and the components are under-utilized. Is it possible to justify the cost of an upgrade to provide additional capacity for only a few hours in 24 hours? Or is it possible to influence the demand and spread the requirement for resource across 24 hours, thereby delaying or avoiding altogether the need for a costly upgrade?
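The question of whether demand could be spread across the day can be explored with a simple calculation over an hourly utilization profile. The sketch below is illustrative only: the 24 hourly figures are invented, demand is expressed as a percentage of processor capacity, and it assumes (optimistically) that any excess work can be moved to any quieter hour.

```python
def overloaded_hours(hourly_demand, capacity):
    """Return the hours of the day (0-23) in which demand exceeds capacity."""
    return [hour for hour, demand in enumerate(hourly_demand) if demand > capacity]


def excess_demand(hourly_demand, capacity):
    """Total demand above capacity, summed over the overloaded hours."""
    return sum(demand - capacity for demand in hourly_demand if demand > capacity)


def spare_capacity(hourly_demand, capacity):
    """Total unused capacity, summed over the hours that are not overloaded."""
    return sum(capacity - demand for demand in hourly_demand if demand <= capacity)


def could_absorb_by_shifting(hourly_demand, capacity):
    """True when the spare capacity in quiet hours covers the peak-hour excess."""
    return excess_demand(hourly_demand, capacity) <= spare_capacity(hourly_demand, capacity)


# Hypothetical profile: light overnight, peaks at 10:00-12:00 and 14:00-16:00.
profile = [10] * 8 + [60, 60, 110, 110, 70, 70, 105, 105, 60, 60] + [10] * 6

print(overloaded_hours(profile, capacity=100))          # [10, 11, 14, 15]
print(excess_demand(profile, capacity=100))             # 30
print(could_absorb_by_shifting(profile, capacity=100))  # True
```

In practice only some workloads can be rescheduled, so the schedule constraints of each service would have to be taken into account before concluding that an upgrade can be avoided.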

Demand Management needs to understand which services are utilizing the resource and to what level, and the schedule of when they must be run. Then a decision can be made on whether it will be possible to influence the use of resource and, if so, which option is appropriate.

The influence on the services that are running could be exercised by:

4.3.5.7 Modeling and Trending

A prime objective of Capacity Management is to predict the behaviour of IT services under a given volume and variety of work. Modeling is an activity that can be used to beneficial effect in any of the sub-processes of Capacity Management.

The different types of modeling range from making estimates based on experience and current resource utilization information, to pilot studies, prototypes and full-scale benchmarks. The former is a cheap and reasonable approach for day-to-day small decisions, while the latter is expensive, but may be advisable when implementing a large new project or service. With all types of modeling, similar levels of accuracy can be obtained, but all are totally dependent on the skill of the person constructing the model and the information used to create it.

Baselining

The first stage in modeling is to create a baseline model that reflects accurately the performance that is being achieved. When this baseline model has been created, predictive modeling can be done, i.e. ask the 'What if?' questions that reflect failures, planned changes to the hardware and/or the volume/variety of workloads. If the baseline model is accurate, then the accuracy of the result of the potential failures and changes can be trusted. Effective Capacity Management, together with modeling techniques, enables Capacity Management to answer the 'What if?' questions. What if the throughput of Service A doubles? What if Service B is moved from the current server onto a new server - what will be the effect on the response times of the two services?

Trend Analysis

Trend analysis can be done on the resource utilization and service performance information that has been collected by the Capacity Management process. The data can be analyzed in a spreadsheet, whose graphing, trending and forecasting facilities can be used to show the utilization of a particular resource over a previous period of time, and how it can be expected to change in the future.

Typically, trend analysis only provides estimates of future resource utilization information. Trend analysis is less effective in producing an accurate estimate of response times, in which case either analytical or simulation modeling should be used. Trend analysis is most effective when there is a linear relationship between a small number of variables, and less effective when there are non-linear relationships between variables or when there are many variables.
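For the linear case, a trend can be fitted with ordinary least squares, which is what a spreadsheet trend line typically does. The sketch below is a minimal example under that assumption; the monthly utilization figures and the 80% threshold are invented for illustration.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("at least two distinct x values are needed")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def period_when_reached(threshold, slope, intercept):
    """Period at which the fitted trend reaches the threshold, or None if not rising."""
    if slope <= 0:
        return None
    return (threshold - intercept) / slope


months = [0, 1, 2, 3, 4, 5]
cpu_utilization = [50, 52, 54, 56, 58, 60]  # percent, hypothetical measurements

slope, intercept = fit_line(months, cpu_utilization)
print(slope, intercept)                            # 2.0 50.0
print(period_when_reached(80, slope, intercept))   # 15.0
```

As the text notes, a straight-line extrapolation of this kind is only reasonable for utilization figures with a roughly linear history; it should not be used to predict response times.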

Analytical Modeling

Analytical models are representations of the behaviour of computer systems using mathematical techniques, e.g. multi-class network queuing theory. Typically, a model is built using a software package on a PC, by specifying within the package the components and structure of the configuration that needs to be modeled, and the utilization of the components, e.g. processor, memory and disks, by the various workloads or applications. When the model is run, the queuing theory is used to calculate the response times in the computer system. If the response times predicted by the model are sufficiently close to the response times recorded in real life, the model can be regarded as an accurate representation of the computer system.

The technique of analytical modeling requires less time and effort than simulation modeling, but typically it gives less accurate results. Also, the model must be kept up-to-date. However, if the results are within 5% accuracy for utilization, and 15-20% for online application response times, the results are usually satisfactory.
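To give a feel for how queuing theory turns utilization into response time, the sketch below uses the single-server M/M/1 formula, R = S / (1 - U), where S is the service time and U the utilization (arrival rate multiplied by S). This is a deliberate simplification: it is far simpler than the multi-class network queuing models mentioned above, and the function names and figures are assumptions made for illustration. The second function applies the accuracy bands quoted in the text to compare a prediction with a measurement.

```python
def mm1_response_time(arrival_rate, service_time):
    """Mean response time of an M/M/1 queue: service_time / (1 - utilization).

    arrival_rate is in transactions per second, service_time in seconds.
    """
    utilization = arrival_rate * service_time
    if utilization >= 1:
        raise ValueError("the server is saturated; the queue grows without bound")
    return service_time / (1 - utilization)


def within_tolerance(predicted, measured, tolerance):
    """True when the prediction is within the given fraction of the measured value."""
    return abs(predicted - measured) <= tolerance * measured


# 'What if?' question: what happens when throughput rises from 5 to 8 per second?
service_time = 0.1  # seconds per transaction, hypothetical
for rate in (5, 8):
    print(rate, round(mm1_response_time(rate, service_time), 3))
# 5 0.2
# 8 0.5

# Validate the baseline model against a measured response time, using a 20% band.
print(within_tolerance(predicted=0.2, measured=0.22, tolerance=0.20))  # True
```

The example shows the characteristic non-linear effect: raising utilization from 50% to 80% more than doubles the predicted response time.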

Simulation Modeling

Simulation involves the modeling of discrete events, e.g. transaction arrival rates, against a given hardware configuration. This type of modeling can be very accurate in sizing new applications or predicting the effects of changes on existing applications, but can also be very time-consuming and therefore costly.

When simulating transaction arrival rates, have a number of staff enter a series of transactions from prepared scripts, or use software to input the same scripted transactions with a random arrival rate. Either of these approaches takes time and effort to prepare and run. However, it can be cost-justified for organizations with very large services and systems where the major cost and the associated performance implications assume great importance.
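The software-driven approach, scripted transactions with a random arrival rate, can be illustrated with a very small discrete-event simulation. The sketch below is an assumption-laden toy: a single server, a fixed service time per transaction, first-come-first-served processing and exponentially distributed gaps between arrivals. A real simulation would model the actual hardware configuration and transaction mix.

```python
import random


def simulate_response_times(arrival_rate, service_time, n_transactions, seed=0):
    """Simulate a single first-come-first-served server with random arrivals.

    arrival_rate is the mean number of transactions per second; the gaps between
    arrivals are drawn from an exponential distribution. Returns the response
    time (waiting plus service) of every simulated transaction.
    """
    rng = random.Random(seed)  # seeded so that runs are repeatable
    clock = 0.0                # arrival time of the current transaction
    server_free_at = 0.0       # time at which the server finishes its current work
    response_times = []
    for _ in range(n_transactions):
        clock += rng.expovariate(arrival_rate)
        start = max(clock, server_free_at)  # wait if the server is still busy
        server_free_at = start + service_time
        response_times.append(server_free_at - clock)
    return response_times


def mean(values):
    return sum(values) / len(values)


light = simulate_response_times(arrival_rate=1, service_time=0.1, n_transactions=1000)
heavy = simulate_response_times(arrival_rate=9, service_time=0.1, n_transactions=1000)
print(f"mean response at 1/s: {mean(light):.3f}s, at 9/s: {mean(heavy):.3f}s")
```

Running the same script against several arrival rates gives a picture of how response time degrades as the load approaches the capacity of the server.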

4.3.5.8 Application Sizing

Application sizing has a finite lifespan. It is initiated at the design stage for a new service, or when there is a major change to an existing service, and is completed when the application is accepted into the live operational environment. Sizing activities should include all areas of technology related to the applications, and not just the applications themselves. This should include the infrastructure, environment and data, and will often use modeling and trending techniques.

The primary objective of application sizing is to estimate the resource requirements to support a proposed change to an existing service or the implementation of a new service, to ensure that it meets its required service levels. To achieve this, application sizing has to be an integral part of the Service Lifecycle.

During the initial requirements and design, the required service levels must be specified in an SLR. This enables the Service Design and development to employ the pertinent technologies and products to achieve a design that meets the desired levels of service. It is much easier and less expensive to achieve the required service levels if Service Design considers the required service levels at the very beginning of the Service Lifecycle, rather than at some later stage.

Other considerations in application sizing are the resilience aspects that it may be necessary to build into the design of new services. Capacity Management is able to provide advice and guidance to the Availability Management process on the resources required to provide the required level of performance and resilience.

The sizing of the application should be refined as the design and development process progresses. Modeling can be used within the application sizing process.

The SLRs of the planned application developments should not be considered in isolation. The resources to be utilized by the application are likely to be shared with other services, and potential threats to existing SLA targets must be recognized and managed.

When purchasing software packages from external suppliers, it is just as important to understand the resource requirements needed to support the service. Often it can be difficult to obtain this information from the suppliers and it may vary, depending on throughput. Therefore, it is beneficial to identify similar customers of the product and to gain an understanding of the resource implications from them. It may be pertinent to benchmark, evaluate or trial the product prior to purchase.

Key message

Some aspects of service quality can be improved after implementation (additional hardware can be added to improve performance, for example). Others - particularly aspects such as reliability and maintainability of applications software - rely on quality being 'built in', since to attempt to add it at a later stage is, in effect, redesign and redevelopment, normally at a much higher cost than the original development. Even in the hardware example quoted above, it is likely to cost more to add additional capacity after service implementation rather than as part of the original project.

The information provided is as follows:

4.3.8 Information Management

The aim of the CMIS is to provide the relevant capacity and performance information to produce reports and support the Capacity Management process. These reports provide valuable information to many IT and Service Management processes. These reports should include the following.

Component-based Reports

For each component there should be a team of technical staff responsible for its control and management. Reports must be produced to illustrate how components are performing and how much of their maximum capacity is being used.

Service-Based Reports

Reports and information must also be produced to illustrate how the service and its constituent components are performing with respect to their overall service targets and constraints. These reports will provide the basis of SLM and customer service reports.

Exception Reports

Reports that show management and technical staff when the capacity and performance of a particular component or service becomes unacceptable are also required from the analysis of capacity data. Thresholds can be set for any component, service or measurement within the CMIS. An example threshold may be that processor percentage utilization for a particular server has breached 70% for three consecutive hours, or that the concurrent number of logged-in users exceeds the agreed limit.
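The processor example, utilization above 70% for three consecutive hours, amounts to looking for a sufficiently long run of breaching samples. A minimal sketch follows; the function name and the sample data are hypothetical, and it assumes one utilization sample per hour.

```python
def breached_for_consecutive(samples, threshold, required_run):
    """True when `required_run` consecutive samples all exceed the threshold."""
    run = 0
    for value in samples:
        run = run + 1 if value > threshold else 0  # any non-breach resets the run
        if run >= required_run:
            return True
    return False


hourly_cpu = [65, 72, 75, 71, 60]  # percent utilization, one sample per hour
print(breached_for_consecutive(hourly_cpu, threshold=70, required_run=3))  # True

spiky_cpu = [72, 69, 75, 74, 68]   # breaches, but never three hours in a row
print(breached_for_consecutive(spiky_cpu, threshold=70, required_run=3))   # False
```

Requiring a sustained breach rather than a single sample is one way to keep exception reports from being dominated by short spikes.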

In particular, exception reports are of interest to the SLM process in determining whether the targets in SLAs have been breached. Also the Incident and Problem Management processes may be able to use the exception reports in the resolution of incidents and problems.

Predictive and Forecast Reports

To ensure the IT service provider continues to provide the required service levels, the Capacity Management process must predict future workloads and growth. To do this, future component and service capacity and performance must be forecast. This can be done in a variety of ways, depending on the techniques and the technology used. Changes to workloads by the development and implementation of new functionality and services must be considered alongside growth in the current functionality and services driven by business growth. A simple example of a capacity forecast is a correlation between a business driver and a component utilization, e.g. processor utilization against the number of customer accounts. This data can be correlated to find the effect that an increase in the number of customer accounts will have on the processor utilization. If the forecasts on future capacity requirements identify a requirement for increased resource, this requirement needs to be input into the Capacity Plan and included within the IT budget cycle.
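The correlation between a business driver and a component utilization can be quantified before it is used for forecasting. The sketch below computes the Pearson correlation coefficient and a least-squares line for processor utilization against the number of customer accounts. All figures are invented, and the linear relationship is an assumption that should be checked against real data.

```python
import math


def correlate_and_fit(driver, utilization):
    """Return (r, slope, intercept) for utilization as a linear function of the driver.

    r is the Pearson correlation coefficient; slope and intercept come from an
    ordinary least-squares fit.
    """
    n = len(driver)
    mean_x = sum(driver) / n
    mean_y = sum(utilization) / n
    sxx = sum((x - mean_x) ** 2 for x in driver)
    syy = sum((y - mean_y) ** 2 for y in utilization)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(driver, utilization))
    if sxx == 0 or syy == 0:
        raise ValueError("both series must vary for a correlation to be defined")
    r = sxy / math.sqrt(sxx * syy)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return r, slope, intercept


accounts = [10_000, 20_000, 30_000, 40_000]  # business driver, hypothetical
cpu_percent = [25, 35, 45, 55]               # measured processor utilization

r, slope, intercept = correlate_and_fit(accounts, cpu_percent)
forecast = slope * 60_000 + intercept        # utilization expected at 60,000 accounts
print(round(r, 3), round(forecast, 1))       # 1.0 75.0
```

A coefficient close to 1 suggests the driver is a usable predictor; a weak correlation indicates that other drivers or a different modeling technique should be considered.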

Often capacity reports are consolidated together and stored on an intranet site so that anyone can access and refer to them.

The Capacity Management Information System (CMIS) is the cornerstone of a successful Capacity Management process. Information contained within the CMIS is stored and analyzed by all the sub-processes of Capacity Management because it is a repository that holds a number of different types of data, including business, service, resource or utilization and financial data, from all areas of technology.

However, the CMIS is unlikely to be a single database, and probably exists in several physical locations. Data from all areas of technology, and all components that make up the IT services, can then be combined for analysis and provision of technical and management reporting. Only when all of the information is integrated can 'end-to-end' service reports be produced. The integrity and accuracy of the data within the CMIS needs to be carefully managed. If the CMIS is not part of an overall CMS or SKMS, then links between these systems need to be implemented to ensure consistency and accuracy of the information recorded within them.

The information in the CMIS is used to form the basis of performance and Capacity Management reports and views that are to be delivered to customers, IT management and technical personnel. Also, the data is utilized to generate future capacity forecasts and allow Capacity Management to plan for future capacity requirements. Often a web interface is provided to the CMIS to provide the different access and views required outside of the Capacity Management process itself.

The full range of data types stored within the CMIS is as follows.

Business Data

It is essential to have quality information on the current and future needs of the business. The future business plans of the organization need to be considered and the effects on the IT services understood. The business data is used to forecast and validate how changes in business drivers affect the capacity and performance of the IT infrastructure. Business data should include business transactions or measurements such as the number of accounts, the number of invoices generated, the number of product lines.

Service Data

To achieve a service-orientated approach to Capacity Management, service data should be stored within the CMIS. Typical service data are transaction response times, transaction rates, workload volumes, etc. In general, the SLAs and SLRs provide the service targets for which the Capacity Management process needs to record and monitor data. To ensure that the targets in the SLAs are achieved, SLM thresholds should be included, so that the monitoring activity can measure against these service thresholds and raise exception warnings and reports before service targets are breached.
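A service threshold set tighter than the SLA target gives the monitoring activity room to warn before the target itself is breached. The sketch below illustrates the idea for a response-time target; the function name, the three status labels and the figures are assumptions made for the example.

```python
def classify_response_time(measured_seconds, warning_threshold, sla_target):
    """Classify a measured response time against a warning threshold and an SLA target.

    The warning threshold must not exceed the SLA target, so that a warning is
    raised while there is still time to act.
    """
    if warning_threshold > sla_target:
        raise ValueError("the warning threshold should not exceed the SLA target")
    if measured_seconds > sla_target:
        return "breach"
    if measured_seconds > warning_threshold:
        return "warning"
    return "ok"


# Hypothetical SLA target of 2.0 seconds with a warning threshold at 1.6 seconds.
for measured in (1.2, 1.8, 2.3):
    print(measured, classify_response_time(measured, warning_threshold=1.6, sla_target=2.0))
# 1.2 ok
# 1.8 warning
# 2.3 breach
```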

Component Utilization Data

The CMIS also needs to record resource data consisting of utilization, threshold and limit information on all of the technological components supporting the services. Most of the IT components have limitations on the level to which they should be utilized. Beyond this level of utilization, the resource will be over-utilized and the performance of the services using the resource will be impaired. For example, the maximum recommended level of utilization on a processor could be 80%, or the utilization of a shared Ethernet LAN segment should not exceed 40%.

Also, components have various physical limitations beyond which greater connectivity or use is impossible. For example, the maximum number of connections through an application or a network gateway is 100, or a particular type of disk has a physical capacity of 15GB. The CMIS should therefore contain, for each component, the maximum performance and capacity limits, current and past utilization rates and the associated component thresholds. Over time this can require vast amounts of data to be accumulated, so there need to be good techniques for analyzing, aggregating and archiving this data.

Financial Data

The Capacity Management process requires financial data. For evaluating alternative upgrade options, when proposing various scenarios in the Capacity Plan, the financial cost of the upgrades to the components of the IT infrastructure, together with information about the current IT hardware budget, must be known and included in the considerations. Most of this data may be available from the Financial Management for IT services process, but Capacity Management needs to consider this information when managing the future business requirements.

Another challenge is the combination of all of the Component Capacity Management (CCM) data into an integrated set of information that can be analyzed in a consistent manner to provide details of the usage of all components of the services. This is particularly challenging when the information from the different technologies is provided by different tools in differing formats. Often the component information on the performance of the technology is variable in both quality and accuracy.

The amounts of information produced by BCM, and especially SCM and CCM, are huge and the analysis of this information is difficult to achieve. The people and the processes need to focus on the key resources and their usage, whilst not ignoring other areas. In order to do this, appropriate thresholds must be used, and reliance placed on the tools and technology to automatically manage the technology and provide warnings and alerts when things deviate significantly from the 'norm'.

The main CSFs for the Capacity Management process are:

Some of the major risks associated with Capacity Management include:
